This article was published as a part of the Data Science Blogathon.

The term “machine learning” was coined by IBM’s Arthur Samuel. Machine learning is part of artificial intelligence. There are four main types of machine learning:

- supervised learning

- unsupervised learning

- semi-supervised learning

- reinforcement learning

Machine learning uses mathematical and statistical methods to solve problems such as OCR, spam detection, etc.

Source: fs.ac.in/blog/

In this post, we will use the Book-My-Show dataset and apply three machine learning models to analyze which model is a good fit for this dataset.

Problem Statement

Book-My-Show has identified an issue that needs to be resolved. Because it allows advertisements to be displayed on its website, Book-My-Show has concerns about its users’ privacy and the information they access. The displayed advertisements may contain links designed to trick users into installing malicious programs, locking their computers, or exposing their private information, which can be devastating. Book-My-Show therefore wants to analyze these URLs, determine whether they are phishing links, and fix the problem.

To tackle the Book-My-Show problem, we build and compare three models: Logistic Regression, KNeighbors, and XGB.

About the Book-My-Show Dataset

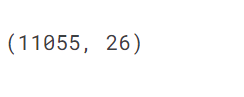

The input dataset contains 11k samples, corresponding to 11k URLs. Each sample describes its URL through a set of features, and each feature takes one of the values -1 (suspicious), 0 (phishing), or 1 (legitimate). Together, these values indicate whether the URL is likely to be a legitimate or a phishing link.

Implementation

The practical implementation is done on Kaggle. You can access the dataset and the notebook from the links below; both are on my kaggle.com account.

Dataset link: https://www.kaggle.com/datasets/shibumohapatra/book-my-show

Notebook link: https://www.kaggle.com/code/shibumohapatra/logical-regression-kneighbors-xgb

Module and library information

- Numpy: linear algebra

- Pandas: data processing like pd.read_csv

- Matplotlib: plotting graphs

- Seaborn: visualization of distributions and heatmaps

- xgboost: provides the XGBClassifier model

- Sklearn: machine learning tools and statistical modeling. The Sklearn modules and functions used here are listed below:

- sklearn.model_selection: train_test_split splits the data into random training and test subsets; this module also provides the four tools that follow.

- cross_val_score: evaluates a model with cross-validation and returns one score per fold.

- KFold: splits the data into K folds, each used once for validation while the rest is used for training, which gives a more reliable estimate of performance on unseen data.

- GridSearchCV: tries every combination in a grid of hyperparameter values with cross-validation and keeps the best one.

- StratifiedKFold: a K-fold variant that keeps the proportion of each target class the same in every fold.

- sklearn.linear_model: provides the LogisticRegression model

- sklearn.neighbors: provides the KNeighborsClassifier model

- sklearn.metrics: accuracy scores, classification reports, confusion matrices, ROC curves, and AUC

import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
%matplotlib inline
import seaborn as sns
from sklearn.model_selection import train_test_split, cross_val_score, KFold, GridSearchCV, StratifiedKFold
from sklearn.metrics import accuracy_score, confusion_matrix, classification_report, roc_curve, auc
from sklearn.linear_model import LogisticRegression
from sklearn.neighbors import KNeighborsClassifier
from xgboost import XGBClassifier

Exploratory Data Analysis (EDA)

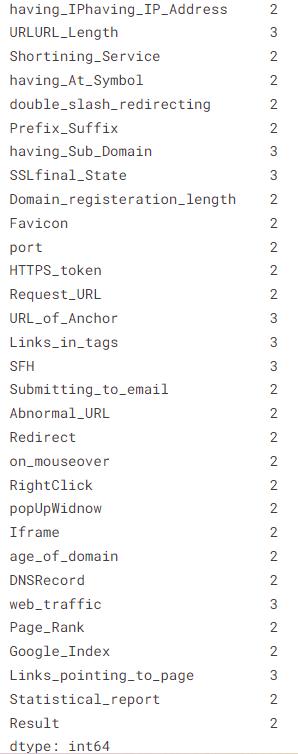

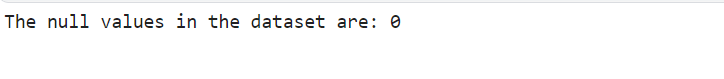

We explore the data using histograms and heatmaps. First, we determine the number of samples and the number of unique values in each feature, and then check whether any of the columns have null values.

# df_data is assumed to already hold the Book-My-Show CSV, loaded with pd.read_csv
df_data = df_data.drop(columns='index')

df_data.nunique()

#check for NULL value in the dataset

print("The null values in the dataset are:",df_data.isnull().sum().sum())

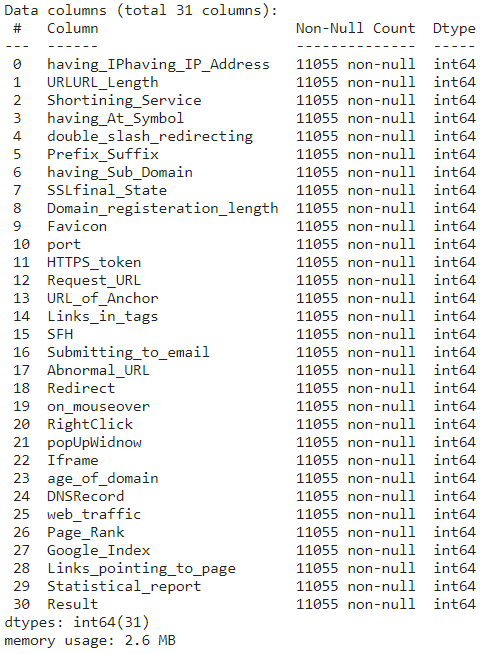

# NULL value check
df_data.info()

# check for duplicate columns: transpose so that columns become rows
df1 = df_data.T
print("The number of duplicate columns in the dataset is:", df1.duplicated().sum())

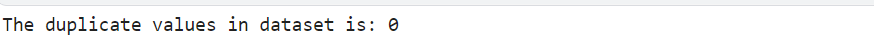

Plot Histograms for Data Exploration

df_data.hist(bins=50, figsize=(20,15))
plt.show()

The histograms show the distribution of each feature: every feature takes only the values -1, 0, and 1.

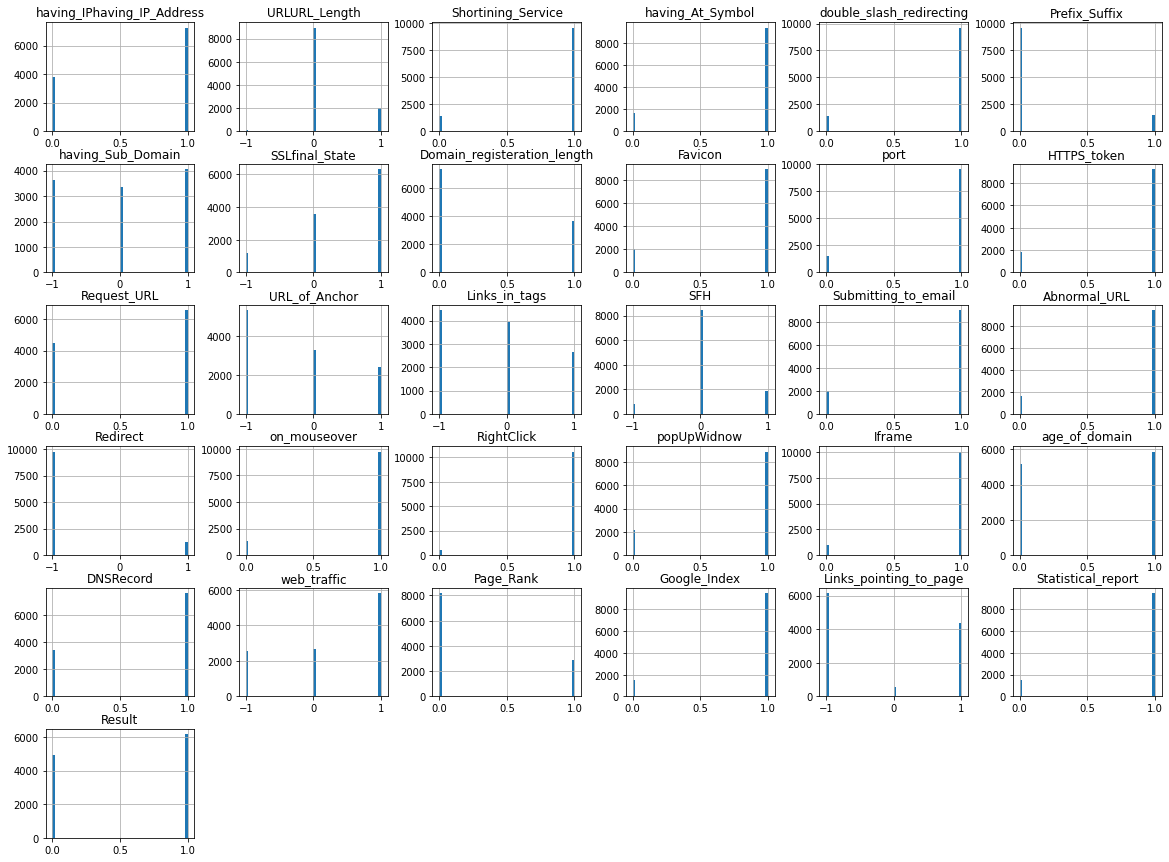

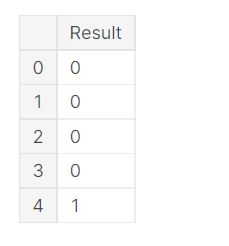

df_data['Result'].value_counts().plot.bar()

The distribution of legitimate (1) and phishing (0) URLs is shown in the chart above.

Correlating Features and Feature Selection

Next, we check whether any features in the data are correlated and remove those whose correlation exceeds a chosen threshold.

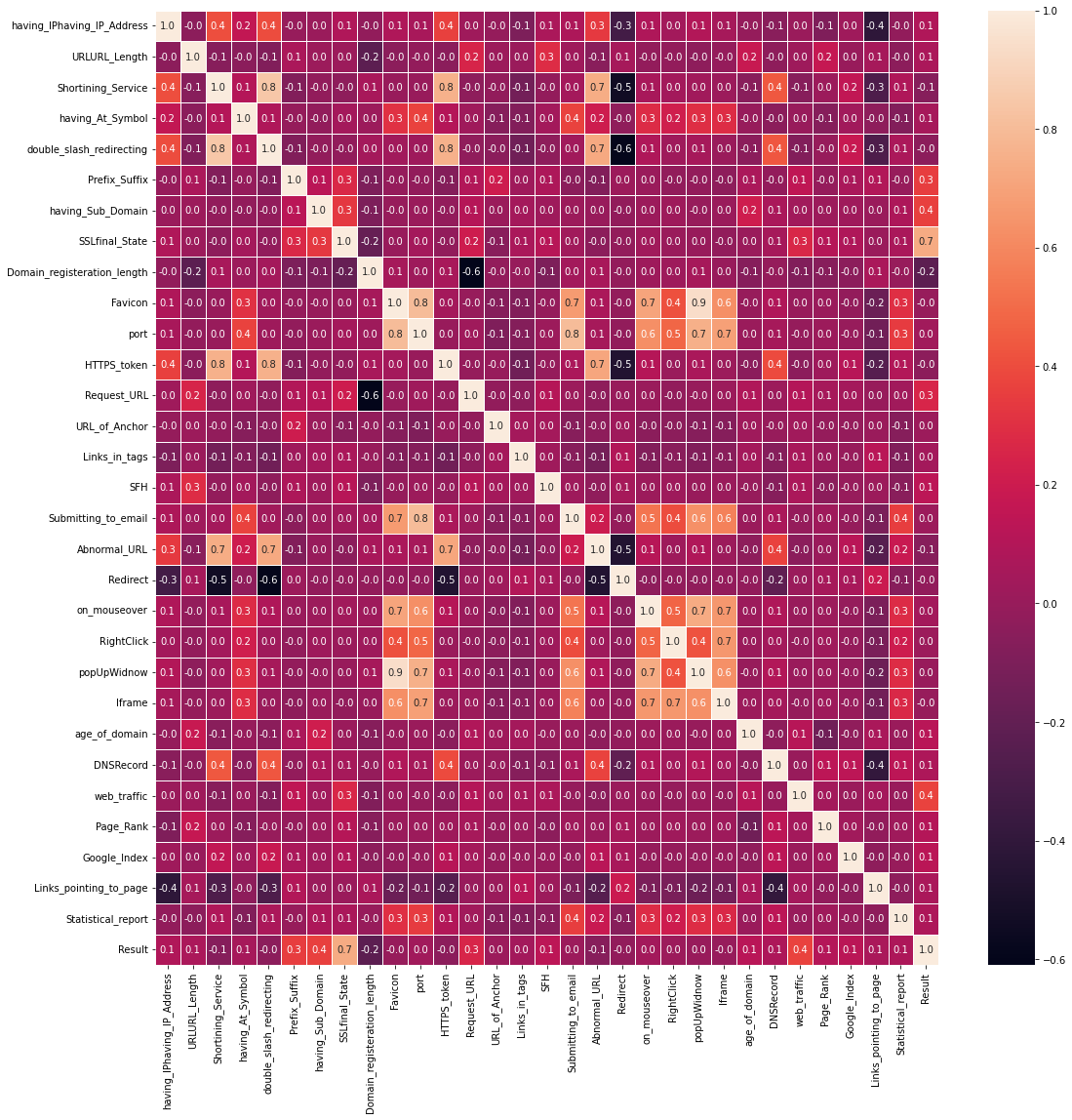

# correlation map
f, ax = plt.subplots(figsize=(18,18))
sns.heatmap(df_data.corr(), annot=True, ax=ax)
plt.show()

In the heatmap, ‘popUpWindow’ and ‘Favicon’ have a strong correlation of 0.9, which suggests data redundancy, so one of the two columns should be removed. More generally, we look for highly correlated independent variables and drop one column from each such pair: the threshold is set at 0.75, and columns with an absolute correlation greater than 0.75 are removed.

cor_matrix = df_data.corr().abs()
# keep only the upper triangle (k=1 excludes the diagonal) so each pair is checked once
upper_tri = cor_matrix.where(np.triu(np.ones(cor_matrix.shape), k=1).astype(bool))

# threshold greater than 0.75
to_drop = [column for column in upper_tri.columns if any(upper_tri[column] > 0.75)]
print(to_drop)

# drop the columns which are highly correlated
df_data.drop(to_drop, axis=1, inplace=True)
df_data.shape

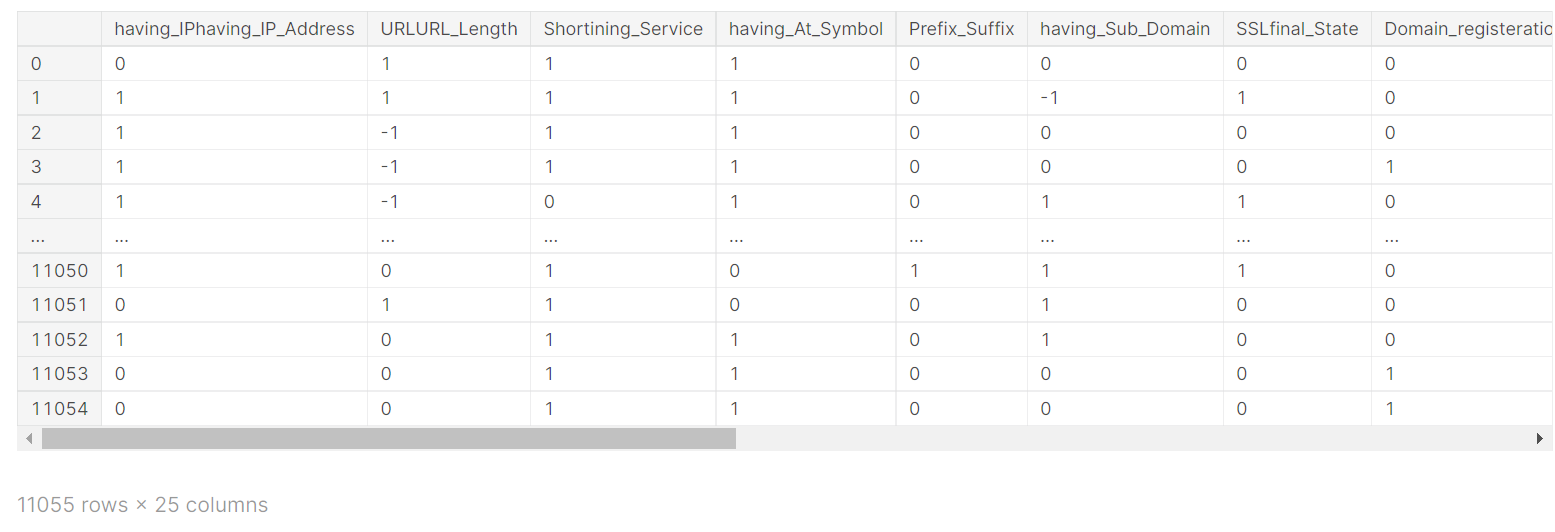
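To see why the upper-triangle rule drops only one column from each correlated pair, here is a minimal pure-Python sketch of the same rule. The column names f1, f2, f3 and their values are a made-up toy example, not Book-My-Show data, and the sketch assumes no column is constant (a zero standard deviation makes the correlation undefined).

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def columns_to_drop(data, threshold=0.75):
    """Drop column j if |corr(i, j)| > threshold for some earlier column i < j.

    Only columns to the left of j are compared, which is exactly what the
    upper triangle (k=1) of the correlation matrix contains, so the first
    column of a correlated pair is kept and the second one is dropped.
    """
    names = list(data)
    to_drop = []
    for j, col_j in enumerate(names):
        if any(abs(pearson(data[names[i]], data[col_j])) > threshold for i in range(j)):
            to_drop.append(col_j)
    return to_drop


# hypothetical toy features encoded with -1 / 0 / 1, like the dataset
data = {
    "f1": [1, -1, 1, 0, -1, 1],
    "f2": [1, -1, 1, 0, -1, 0],   # almost a copy of f1 (corr ~ 0.91)
    "f3": [0, 1, -1, 1, 0, -1],   # only moderately correlated with the others
}
print(columns_to_drop(data))  # ['f2']
```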

X = df_data.drop(columns="Result")
X

Y = df_data['Result']
Y = pd.DataFrame(Y)
Y.head()

Here we split the data into a training set and a test set.

# split train - test to 70-30
train_X, test_X, train_Y, test_Y = train_test_split(X, Y, test_size=0.3, random_state=9)

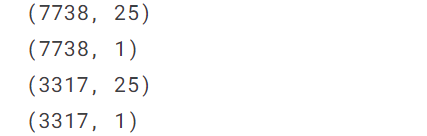

print(train_X.shape)
print(train_Y.shape)
print(test_X.shape)
print(test_Y.shape)

Classification Model

With a clear understanding of the data, we can now build classification models to identify malicious URLs.

# build a binary classification model, report its accuracy and show its confusion matrix
def build_model(model_name, train_X, train_Y, test_X, test_Y):
    if model_name == 'LogisticRegression':
        model = LogisticRegression()
    elif model_name == 'KNeighborsClassifier':
        model = KNeighborsClassifier(n_neighbors=4)
    elif model_name == 'XGBClassifier':
        model = XGBClassifier(objective="binary:logistic", eval_metric="auc")
    else:
        # stop here instead of failing later with an undefined `model`
        raise ValueError('not a valid model name: ' + model_name)

    # ravel the one-column DataFrame into the 1-D label array the models expect
    model = model.fit(train_X, np.ravel(train_Y))

    # probability of the positive class (label 1), used for the ROC curve
    pred_prob = model.predict_proba(test_X)
    fpr, tpr, thresh = roc_curve(test_Y, pred_prob[:, 1], pos_label=1)

    model_predict = model.predict(test_X)
    # sklearn metrics take (y_true, y_pred) in that order
    acc = accuracy_score(test_Y, model_predict)
    print("Accuracy: ", acc)

    # Classification report
    print("Classification Report: ")
    print(classification_report(test_Y, model_predict))

    # confusion matrix: rows are true labels, columns are predicted labels
    con = confusion_matrix(test_Y, model_predict)
    sns.heatmap(con, annot=True, fmt="d")
    plt.suptitle('Confusion Matrix for ' + model_name, x=0.44, y=1.0, ha="center", fontsize=25)
    plt.xlabel('Predicted Values', fontsize=25)
    plt.ylabel('True Values', fontsize=25)
    plt.show()

    return model, acc, fpr, tpr, thresh

For each model, build_model prints the accuracy and the classification report and draws the confusion matrix as a heatmap, so we can compare the performance of the models.

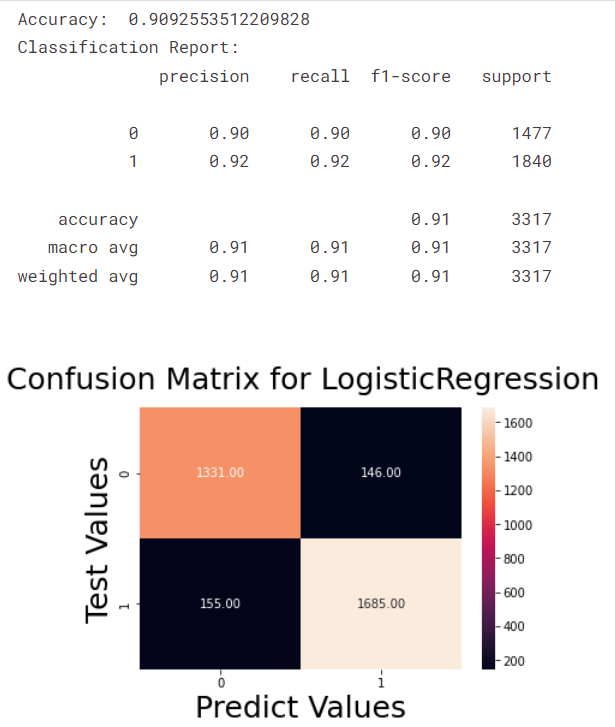
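sklearn.metrics.confusion_matrix(y_true, y_pred) puts the true labels on the rows and the predicted labels on the columns, so swapping its arguments transposes the matrix: accuracy is unaffected, but the heatmap's axis labels would then be wrong. The minimal pure-Python sketch below, on made-up labels, reproduces that layout and reads accuracy off the diagonal; the helper names are hypothetical and not part of scikit-learn.

```python
def confusion_matrix_from_lists(y_true, y_pred, labels=(0, 1)):
    """Count predictions: rows are true classes, columns are predicted classes."""
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for t, p in zip(y_true, y_pred):
        matrix[index[t]][index[p]] += 1
    return matrix


def accuracy_from_matrix(matrix):
    """Correct predictions (the diagonal) divided by all predictions."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total


# toy labels: 0 = phishing, 1 = legitimate (made up for illustration)
y_true = [0, 0, 0, 1, 1, 1]
y_pred = [0, 1, 1, 1, 1, 1]
print(confusion_matrix_from_lists(y_true, y_pred))  # [[1, 2], [0, 3]]
print(confusion_matrix_from_lists(y_pred, y_true))  # [[1, 0], [2, 3]] (arguments swapped)
print(accuracy_from_matrix(confusion_matrix_from_lists(y_true, y_pred)))
```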

Model 1 – Logistic Regression

Logistic regression is a supervised learning method used to predict the probability of an event that has two possible outcomes, such as yes or no. A classic example is predicting whether a person has a cold: the patient either is infected or is not, and that is the result. There are three types of logistic regression:

- Binomial: the target has two outcomes, 0 or 1, such as win or lose.

- Multinomial: the target has more than two unordered categories.

- Ordinal: the target categories are ordered, such as test scores of 0, 1, 2, and so on.

Source: logistic regression
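The binomial case is the one used here (phishing vs legitimate). As a minimal sketch of how a fitted binomial model turns features into a yes/no answer, the code below passes a weighted sum of the features through the logistic (sigmoid) function and thresholds the resulting probability at 0.5. The weights and bias are hypothetical values chosen by hand for illustration; LogisticRegression learns them from the training data instead.

```python
from math import exp


def sigmoid(z):
    """Logistic function 1 / (1 + e^-z), written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + exp(-z))
    e = exp(z)
    return e / (1.0 + e)


def predict_proba(weights, bias, x):
    """P(y = 1 | x) for a binomial logistic regression model."""
    z = bias + sum(w * xi for w, xi in zip(weights, x))
    return sigmoid(z)


def predict(weights, bias, x, threshold=0.5):
    """Turn the probability into a yes (1) / no (0) decision."""
    return 1 if predict_proba(weights, bias, x) >= threshold else 0


# hypothetical coefficients chosen by hand (not fitted to any data)
weights, bias = [2.0, -1.0], -0.5
print(predict_proba(weights, bias, [1, 1]))                      # z = 0.5, p ~ 0.62
print(predict(weights, bias, [1, 1]), predict(weights, bias, [0, 1]))  # 1 0
```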

# Model 1 - LogisticRegression

lg_model,acc1, fpr1, tpr1, thresh1 = build_model('LogisticRegression',train_X, train_Y, test_X, test_Y.values.ravel())


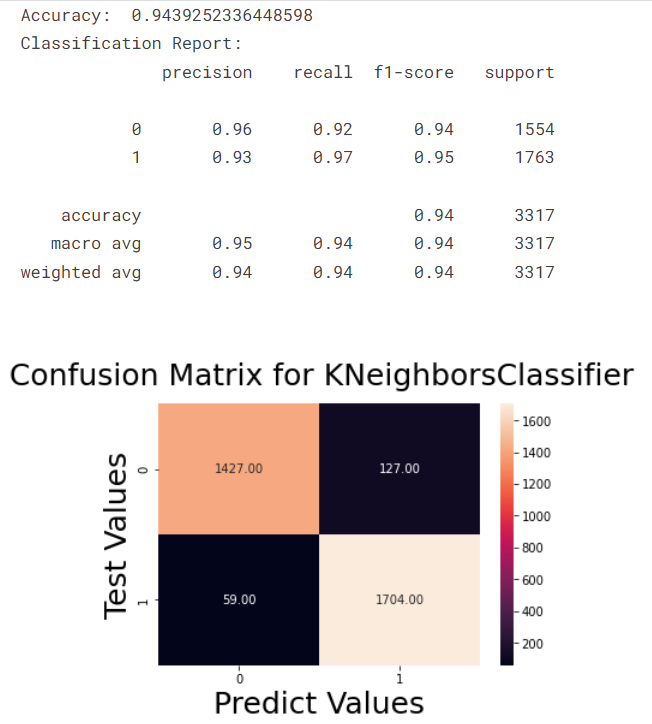

Model 2 – KNeighbors classifier

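K-nearest neighbors (KNeighbors) is a supervised method used for both classification and regression problems. It predicts the class (or continuous value) of a new data point from the labels of its k closest training points. KNeighbors is a lazy learner: it does no real training and simply stores the training data, deferring all the work to prediction time.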

Source: KNeighbors
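As a minimal sketch of the idea, the pure-Python classifier below votes among the k nearest training points. It supports the two options that the grid search later tunes, `metric` (Euclidean or Manhattan) and `weights` ("uniform" or "distance", where closer neighbors count more with weight 1/d). It is an illustration on made-up points, not scikit-learn's implementation, which uses optimized neighbor searches.

```python
from collections import defaultdict
from math import sqrt


def euclidean(a, b):
    return sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def manhattan(a, b):
    return sum(abs(x - y) for x, y in zip(a, b))


def knn_predict(train_X, train_y, query, k=3, metric=euclidean, weights="uniform"):
    """Predict the label of `query` by a vote among its k nearest training points.

    There is no training step ("lazy" learning): every call measures the
    distance from `query` to all stored samples.
    """
    dists = sorted(
        ((metric(x, query), y) for x, y in zip(train_X, train_y)),
        key=lambda pair: pair[0],
    )
    nearest = dists[:k]
    votes = defaultdict(float)
    exact = [label for d, label in nearest if d == 0]
    if weights == "distance" and exact:
        # exact matches would get infinite weight, so they take the whole vote
        for label in exact:
            votes[label] += 1.0
    else:
        for d, label in nearest:
            # "distance": weight 1/d; "uniform": every neighbor counts once
            votes[label] += 1.0 / d if weights == "distance" else 1.0
    return max(votes, key=votes.get)


# made-up 2-D points: class 0 near the origin, class 1 near (5, 5)
train_X = [[0, 0], [0, 1], [1, 0], [5, 5], [5, 6], [6, 5]]
train_y = [0, 0, 0, 1, 1, 1]
print(knn_predict(train_X, train_y, [1, 1]))                    # 0
print(knn_predict(train_X, train_y, [4, 4], metric=manhattan))  # 1
```

The weighting option matters when the nearest neighbor disagrees with the majority: with points at 0 (class 0), 3 and 3.5 (class 1) and a query at 0.5, a uniform 3-NN vote returns 1, while distance weighting returns 0.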

# Model 2 - KNeighborsClassifier

knn_model,acc2, fpr2, tpr2, thresh2 = build_model('KNeighborsClassifier',train_X, train_Y, test_X, test_Y.values.ravel())


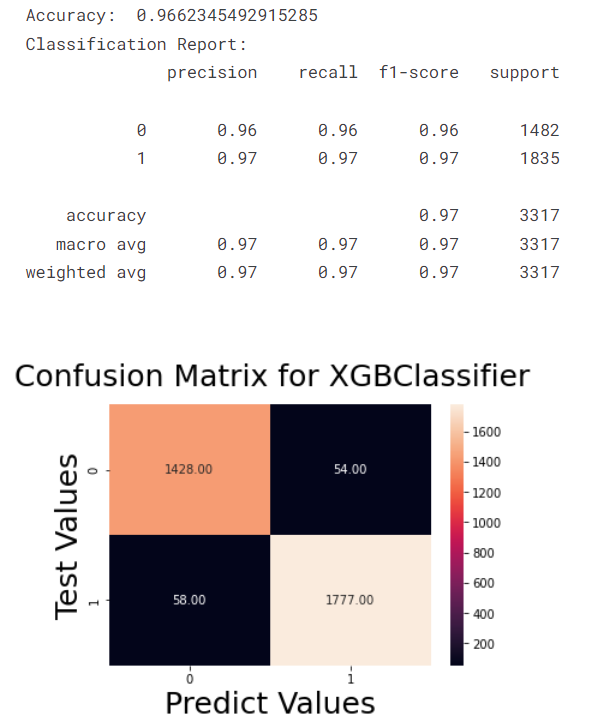

Model 3 – XGB classifier

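XGB (XGBoost, eXtreme Gradient Boosting) is an ensemble method built on gradient-boosted decision trees: the trees are added one after another, and each new tree is fitted to correct the errors made by the trees before it. The library offers parallel tree boosting, and boosting combines many weak trees into a powerful classifier.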
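To make "each tree corrects the errors of the previous ones" concrete, here is a heavily simplified pure-Python sketch of boosting for binary classification with the log-loss. Each round fits a depth-1 tree (a stump) to the gradients and Hessians of the current predictions and uses a Newton-style leaf weight of -G/(H + λ), in the spirit of XGBoost. The real library adds deeper trees, more regularization, subsampling, and parallel split finding, so treat this as an illustration only. The toy data and the parameter values (20 rounds, learning rate 0.3, λ = 1) are assumptions chosen for the example.

```python
from math import exp


def sigmoid(z):
    if z >= 0:
        return 1.0 / (1.0 + exp(-z))
    e = exp(z)
    return e / (1.0 + e)


def leaf_weight(idx, grad, hess, lam):
    """Newton step for the samples in one leaf: -G / (H + lambda)."""
    G = sum(grad[i] for i in idx)
    H = sum(hess[i] for i in idx)
    return -G / (H + lam)


def fit_stump(X, grad, hess, lam):
    """Depth-1 tree: pick the split maximizing G_L^2/(H_L+lam) + G_R^2/(H_R+lam)."""
    all_idx = range(len(X))
    best_score, best = None, None
    for f in range(len(X[0])):
        for thr in sorted({row[f] for row in X}):
            left = [i for i in all_idx if X[i][f] <= thr]
            right = [i for i in all_idx if X[i][f] > thr]
            if not left or not right:
                continue
            score = sum(
                sum(grad[i] for i in idx) ** 2 / (sum(hess[i] for i in idx) + lam)
                for idx in (left, right)
            )
            if best_score is None or score > best_score:
                best_score = score
                best = (f, thr, leaf_weight(left, grad, hess, lam),
                        leaf_weight(right, grad, hess, lam))
    if best is None:  # every feature is constant: one leaf for all samples
        w = leaf_weight(list(all_idx), grad, hess, lam)
        best = (0, float("inf"), w, w)
    return best


def stump_output(stump, row):
    f, thr, w_left, w_right = stump
    return w_left if row[f] <= thr else w_right


def fit_boosted_stumps(X, y, n_rounds=20, eta=0.3, lam=1.0):
    """Add stumps sequentially; each is fitted to the log-loss gradients at the
    current predictions, i.e. to the errors the ensemble still makes."""
    margins = [0.0] * len(X)  # raw scores F(x); 0 means p = 0.5
    stumps = []
    for _ in range(n_rounds):
        probs = [sigmoid(m) for m in margins]
        grad = [p - t for p, t in zip(probs, y)]  # first derivative of log-loss
        hess = [p * (1.0 - p) for p in probs]     # second derivative
        f, thr, w_l, w_r = fit_stump(X, grad, hess, lam)
        stump = (f, thr, eta * w_l, eta * w_r)    # shrink by the learning rate
        stumps.append(stump)
        margins = [m + stump_output(stump, row) for m, row in zip(margins, X)]
    return stumps


def predict_proba(stumps, row):
    return sigmoid(sum(stump_output(s, row) for s in stumps))


# hypothetical toy data: feature 0 separates the classes at 3
X = [[1, 0], [2, 1], [3, 0], [4, 1], [5, 0], [6, 1]]
y = [0, 0, 0, 1, 1, 1]
model = fit_boosted_stumps(X, y)
print([round(predict_proba(model, row), 3) for row in X])
```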

# Model 3 - XGBClassifier

xgb_model, acc3, fpr3, tpr3, thresh3 = build_model('XGBClassifier',train_X, train_Y, test_X, test_Y.values.ravel())

The confusion matrices show that the XGB classifier has the highest accuracy of the three models.

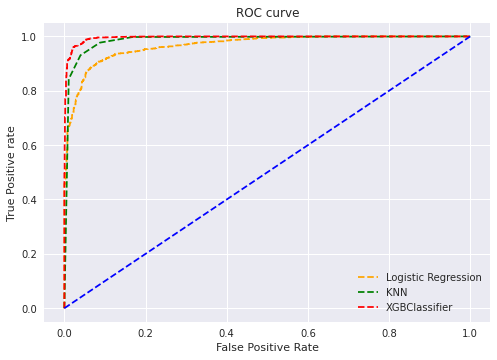

To compare the diagnostic ability of the classifiers, we plot their ROC curves.

# roc curve for tpr = fpr
random_probs = [0 for i in range(len(test_Y))]
p_fpr, p_tpr, _ = roc_curve(test_Y, random_probs, pos_label=1)

Plot ROC curve

# the 'seaborn' style was renamed 'seaborn-v0_8' in Matplotlib 3.6
plt.style.use('seaborn-v0_8')

# plot roc curves

plt.plot(fpr1, tpr1, linestyle="--",color="orange", label="Logistic Regression")

plt.plot(fpr2, tpr2, linestyle="--",color="green", label="KNN")

plt.plot(fpr3, tpr3, linestyle="--",color="red", label="XGBClassifier")

plt.plot(p_fpr, p_tpr, linestyle="--", color="blue")

# title

plt.title('ROC curve')

# x label

plt.xlabel('False Positive Rate')

# y label

plt.ylabel('True Positive rate')

plt.legend(loc="best")

plt.savefig('ROC',dpi=300)

plt.show()

The ROC plot shows that the XGBClassifier reaches higher true positive rates (TPR) than the other models.

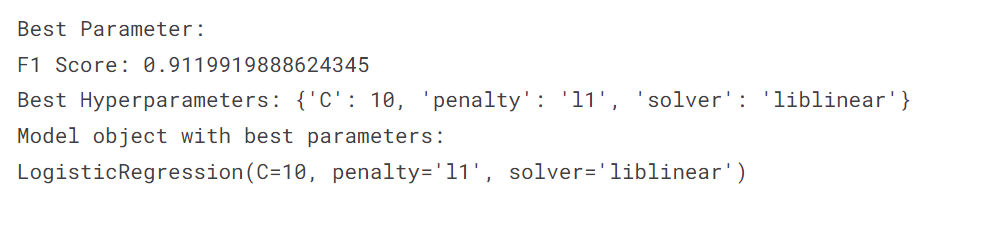
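A ROC curve is traced by lowering the decision threshold through every distinct score, and the area under it (AUC, which `sklearn.metrics.auc` computes with the trapezoidal rule) summarizes the curve in one number. The minimal pure-Python sketch below assumes labels 0/1; the labels and scores are made up. It also shows why the all-zero `random_probs` baseline gives the diagonal TPR = FPR: a constant score yields a single threshold, so AUC is 0.5.

```python
def roc_points(y_true, scores):
    """(FPR, TPR) pairs obtained by lowering the threshold through every
    distinct score. Labels must be 0 (negative) or 1 (positive)."""
    pos = sum(y_true)
    neg = len(y_true) - pos
    pairs = sorted(zip(scores, y_true), key=lambda p: p[0], reverse=True)
    fpr, tpr = [0.0], [0.0]
    tp = fp = 0
    for i, (score, label) in enumerate(pairs):
        if label == 1:
            tp += 1
        else:
            fp += 1
        # tied scores share one threshold, so emit a point after the last of them
        if i == len(pairs) - 1 or pairs[i + 1][0] != score:
            fpr.append(fp / neg)
            tpr.append(tp / pos)
    return fpr, tpr


def trapezoid_auc(fpr, tpr):
    """Area under the curve by the trapezoidal rule."""
    return sum(
        (fpr[i] - fpr[i - 1]) * (tpr[i] + tpr[i - 1]) / 2.0
        for i in range(1, len(fpr))
    )


y_true = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
print(trapezoid_auc(*roc_points(y_true, scores)))        # 0.75
print(trapezoid_auc(*roc_points(y_true, [0, 0, 0, 0])))  # 0.5 (constant-score baseline)
```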

To verify these accuracies, we use K-fold cross-validation. GridSearchCV finds the best hyperparameters for each model, and the StratifiedKFold cross-validation technique estimates each model's accuracy.
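Stratification means that every validation fold keeps roughly the same class proportions as the whole dataset, which matters when one class is rarer. The minimal pure-Python sketch below deals each class's indices round-robin across the folds. It illustrates the idea on made-up labels and does not reproduce scikit-learn's exact allocation of samples to folds.

```python
from collections import defaultdict


def stratified_kfold_indices(y, n_splits=4):
    """Split sample indices into n_splits (train, test) pairs such that every
    test fold keeps roughly the class proportions of y."""
    by_class = defaultdict(list)
    for i, label in enumerate(y):
        by_class[label].append(i)
    folds = [[] for _ in range(n_splits)]
    for label in sorted(by_class):
        # deal each class's indices out round-robin across the folds
        for j, i in enumerate(by_class[label]):
            folds[j % n_splits].append(i)
    splits = []
    for k in range(n_splits):
        test_idx = sorted(folds[k])
        held_out = set(test_idx)
        train_idx = [i for i in range(len(y)) if i not in held_out]
        splits.append((train_idx, test_idx))
    return splits


y = [0] * 8 + [1] * 4  # imbalanced toy labels: 2/3 class 0, 1/3 class 1
for train_idx, test_idx in stratified_kfold_indices(y, n_splits=4):
    print(test_idx, [y[i] for i in test_idx])  # every fold: two 0s and one 1
```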

Logistic regression: GridSearchCV over the liblinear and newton-cg solvers and the l1 and l2 penalties

For logistic regression, the best mean accuracy across the 4 StratifiedKFold folds is 91%.

import warnings
warnings.filterwarnings("ignore")

# parameter grid to search over (newton-cg supports only the l2 penalty, so the
# newton-cg/l1 combinations fail and GridSearchCV scores them as NaN)
param_grid = {
    'solver': ['liblinear', 'newton-cg'],
    'C': [0.01, 0.1, 1, 10, 100],
    'penalty': ["l1", "l2"]
}

# Instantiate the grid search model
grid_search = GridSearchCV(estimator=LogisticRegression(), param_grid=param_grid,
                           cv=StratifiedKFold(4), n_jobs=-1, verbose=1, scoring='accuracy')
grid_search.fit(train_X, train_Y.values.ravel())

# scoring='accuracy', so best_score_ is the mean cross-validated accuracy (not F1)
print('Best cross-validated accuracy:', grid_search.best_score_)
print('Best Hyperparameters:', grid_search.best_params_)
print('Model object with best parameters:')
print(grid_search.best_estimator_)

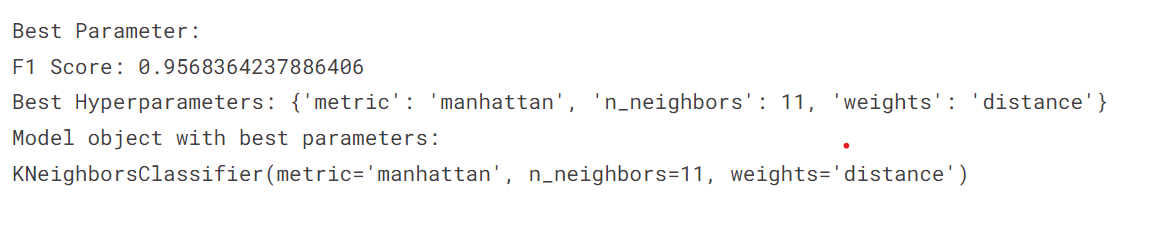

GridSearchCV method for KNeighborsClassifier model evaluation

For the KNeighbors classifier, GridSearchCV with 3-fold cross-validation gives a best mean accuracy of 95%.

grid_params = {
    'n_neighbors': [3, 4, 11, 19],
    'weights': ['uniform', 'distance'],
    'metric': ['euclidean', 'manhattan']
}

gs = GridSearchCV(
    KNeighborsClassifier(),
    grid_params,
    verbose=1,
    cv=3,
    n_jobs=-1
)
gs_results = gs.fit(train_X, train_Y.values.ravel())

# no scoring argument, so best_score_ is the classifier's default score: mean accuracy
print('Best cross-validated accuracy:', gs_results.best_score_)
print('Best Hyperparameters:', gs_results.best_params_)
print('Model object with best parameters:')
print(gs_results.best_estimator_)

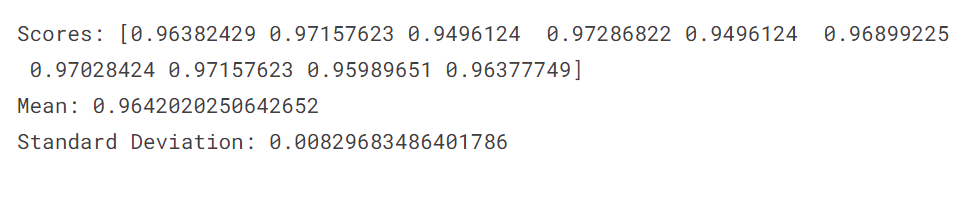

KFold cross-validation method for XGBClassifier model

For the XGB classifier, 10-fold cross-validation gives a mean accuracy of 96%.

xgb_cv = XGBClassifier(n_estimators=100,objective="binary:logistic",eval_metric="auc")

scores = cross_val_score(xgb_cv, train_X, train_Y.values.ravel(), cv=10, scoring = "accuracy")

print("Scores:", scores)

print("Mean:", scores.mean())

print("Standard Deviation:", scores.std())

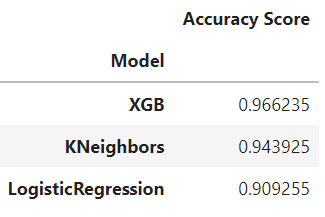

Model Comparison

Finally, we compare the test-set accuracy of the three models trained on the selected features. The comparison table produced by the code below shows that XGBoost is the winner and a good model for ad URL analysis.

results=pd.DataFrame({'Model':['LogisticRegression','KNeighbors','XGB'],

'Accuracy Score':[acc1,acc2,acc3]})

result_df=results.sort_values(by='Accuracy Score', ascending=False)

result_df=result_df.set_index('Model')

result_df

Comparison table

Conclusion

The implementation above helps identify a suitable machine learning model for analyzing ad URLs. We analyzed the underlying structure of the data with exploratory data analysis, using histograms and heatmaps to understand the features of the dataset. We then collected accuracy metrics for each model and created a comparison table showing the overall accuracy of each model. The XGBoost model is the winner of the three machine learning models with 96% accuracy, KNeighbors scored 94%, and logistic regression scored 90%.

Important points:

- The KNeighbors model was slower than the other two because it is a lazy learner: it computes distances to the stored training samples every time it makes a prediction.

- Logistic regression was not flexible enough to capture more complex relationships.

- The other two models were slower than the XGB model.

- XGB has the ability to handle both continuous and categorical data.

- Based on the implementation above, the XGBoost model is the most suitable choice for the Book-My-Show problem.

We hope the above implementation will help you in choosing a machine learning model.

Media shown in this article are not owned by Analytics Vidhya and are used at the author’s discretion.